
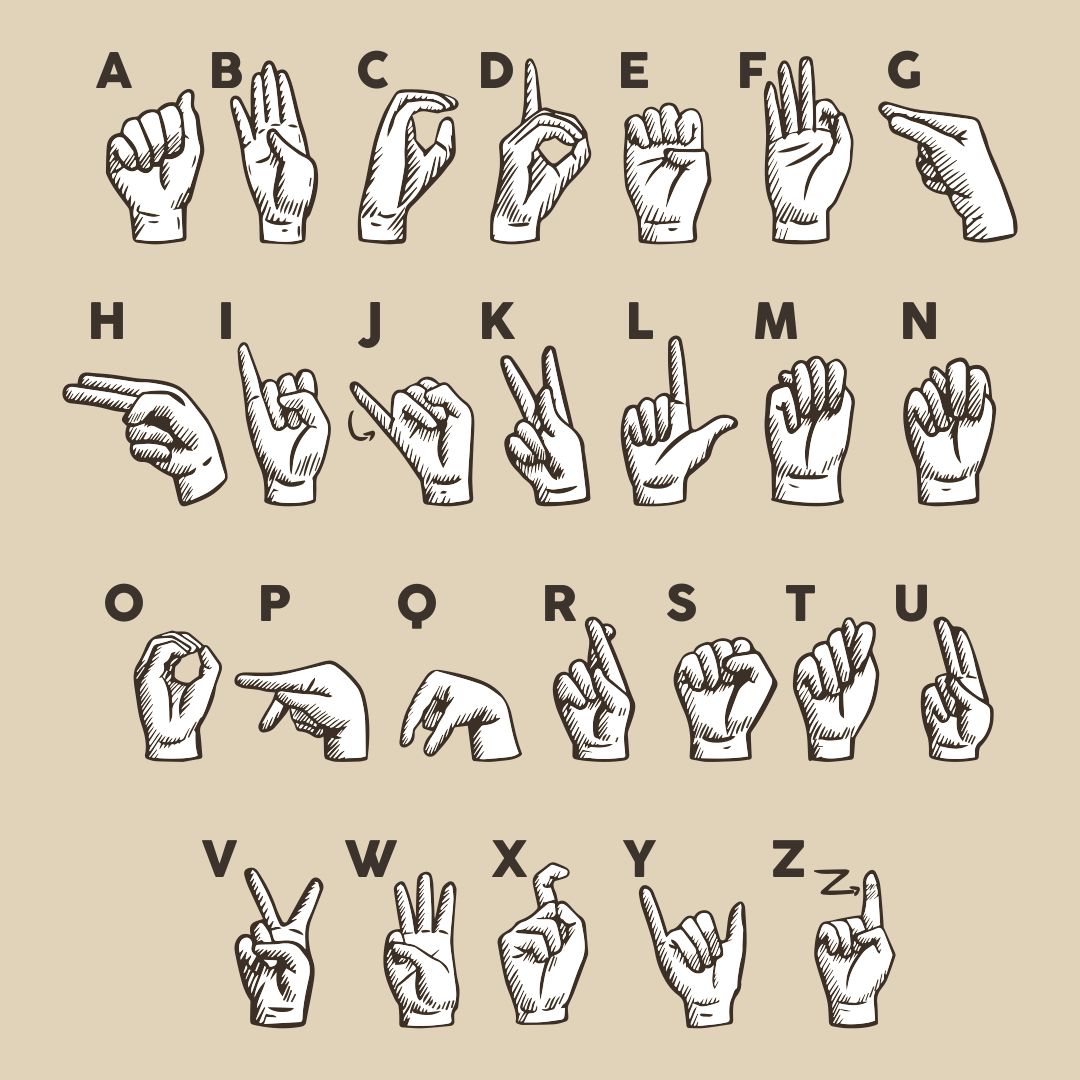

Nguyen & Do extracted Histogram of Oriented Gradients (HOG) and Local Binary Pattern (LBP) features from images and applied SVM and CNN models, reaching 98.36% without considering signer independence. On the fingerspelling A dataset, Rajan & Leo applied a skin color-based YCbCr segmentation method to extract the hand shape. On dynamic gesture recognition, Thongtawee et al. estimated the coordinates of hand joints for classification and achieved 99.39%, 87.60%, and 98.45% on the three datasets named in this review, respectively. Another study used a webcam to collect 26 signs and achieved a recognition rate of up to 95%. Chong & Lee used Leap Motion to recognize 26 letters and 10 digits. Dawod & Chakpitak built a dataset of dynamic signs with Kinect and achieved high accuracy with Random Decision Forest (RDF) and Hidden Conditional Random Fields (HCRF) classifiers.

In wearable sensor-based recognition, commercial devices such as armbands, smartwatches, and data gloves are convenient for experimental applications. Because arm movement is restricted in the sign gestures of the alphabet, hand shape is an important distinguishing factor between letters. One study used the MYO armband to build a dataset from nine participants. The MYO armband returned Inertial Measurement Unit (IMU) signals indicating the forearm direction and electromyographic (EMG) signals indicating the hand shape.
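The skin color-based YCbCr segmentation mentioned above can be illustrated with a minimal sketch. It is not Rajan & Leo's implementation: the conversion uses the full-range BT.601 (JPEG) coefficients, and the Cb and Cr thresholds are commonly quoted skin-tone ranges chosen here as an assumption. The function names `rgb_to_ycbcr` and `skin_mask` are hypothetical.

```python
def rgb_to_ycbcr(r, g, b):
    """Convert one 8-bit RGB pixel to full-range YCbCr (BT.601 / JPEG coefficients)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr


def skin_mask(image, cb_range=(77, 127), cr_range=(133, 173)):
    """Return a boolean mask that is True where a pixel falls in the assumed skin range.

    `image` is a list of rows, each row a list of (r, g, b) tuples.
    The default thresholds are an assumption, not values from the cited study.
    """
    mask = []
    for row in image:
        mask_row = []
        for r, g, b in row:
            _, cb, cr = rgb_to_ycbcr(r, g, b)
            in_cb = cb_range[0] <= cb <= cb_range[1]
            in_cr = cr_range[0] <= cr <= cr_range[1]
            mask_row.append(in_cb and in_cr)
        mask.append(mask_row)
    return mask


# Toy 2x2 image: a skin-like tone, pure blue, white, and pure green.
image = [
    [(224, 172, 105), (0, 0, 255)],
    [(255, 255, 255), (0, 255, 0)],
]
mask = skin_mask(image)
```

Luma (Y) is ignored on purpose so that the mask depends on chrominance only, which makes it less sensitive to brightness changes. In practice the thresholds would need tuning to the camera and lighting.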

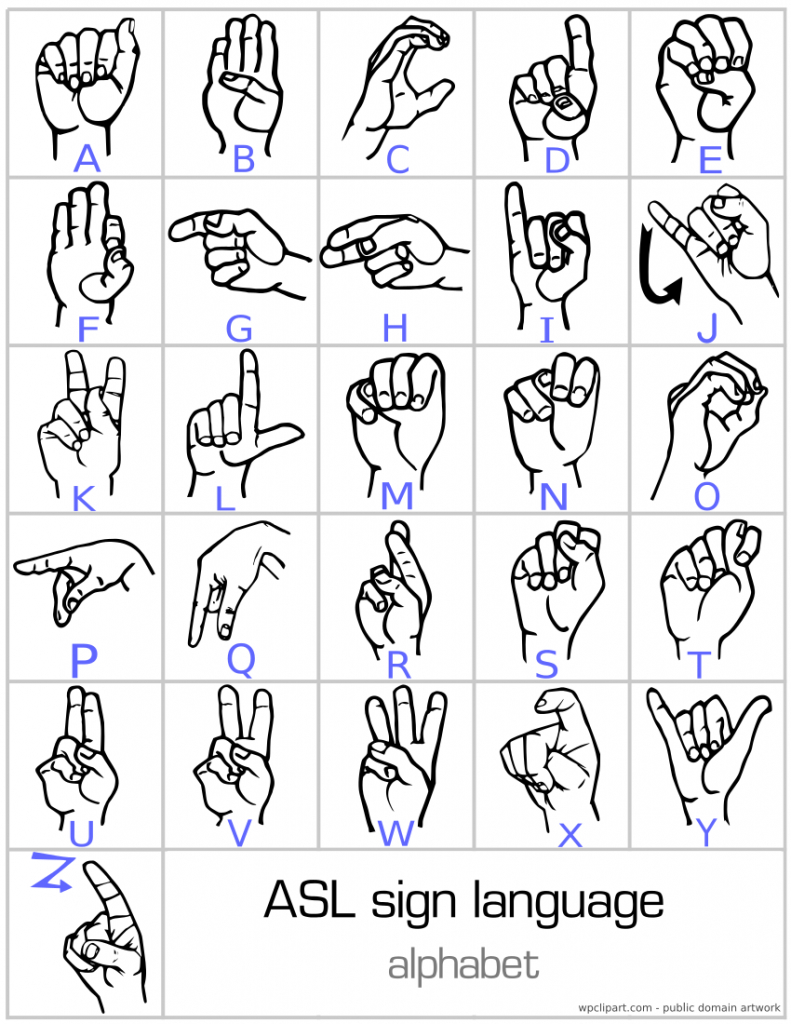

The two proposed sequence recognition models achieve 55.1% and 93.4% accuracy in word-level evaluation, and 86.5% and 97.9% in letter-level evaluation of fingerspelling. The proposed method has the potential to recognize more hand gestures of sign language with highly reliable inertial data from the device.

Hand gesture recognition for this finite corpus of 26 possible classes has been addressed in prior work through two main classification mechanisms: vision-based and wearable sensor-based recognition. The vision-based approach uses an RGB or RGB-D camera to capture static gestures or dynamic movements of the hand. Most of the gestures can be regarded as static, with no movement of the forearm, except for the letters “j” and “z”. Studies that treat hand gestures as static omit these two letters, which leaves a 24-class classification problem. One study built a capsule-based Deep Neural Network (DNN) for sign gesture recognition on the ASL Alphabet dataset and achieved a relatively high classification accuracy of 99%. Another study and Nguyen & Do both performed classification on the Massey dataset. A further study applied a hybrid discrete wavelet transform-Gabor filter for feature extraction from images. Random Forest (RF), Support Vector Machine (SVM), K-Nearest Neighbors (KNN), and Convolutional Neural Network (CNN) models were evaluated, with a highest accuracy of 97.01% in signer-dependent evaluation and 76.25% in signer-independent evaluation.
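The gap between signer-dependent and signer-independent accuracy comes from how the data are split. A minimal sketch of a signer-independent split, assuming a leave-one-signer-out protocol (the text does not state which protocol the cited studies used), is shown here. The function name `leave_one_signer_out` and the sample layout are hypothetical.

```python
def leave_one_signer_out(samples):
    """Yield (held_out_signer, train, test) folds in which no signer appears in both sets.

    `samples` is a list of dicts with at least a "signer" key (assumed layout).
    """
    signers = sorted({sample["signer"] for sample in samples})
    for held_out in signers:
        train = [s for s in samples if s["signer"] != held_out]
        test = [s for s in samples if s["signer"] == held_out]
        yield held_out, train, test


# Toy dataset: three signers, two letters.
samples = [
    {"signer": "s1", "label": "a"},
    {"signer": "s1", "label": "b"},
    {"signer": "s2", "label": "a"},
    {"signer": "s3", "label": "b"},
]
folds = list(leave_one_signer_out(samples))
```

A signer-dependent evaluation would instead shuffle all samples before splitting, so the same person can appear in both training and test data, which typically inflates accuracy.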

In fingerspelling recognition, we explore two kinds of models: a connectionist temporal classification (CTC) model and an encoder-decoder structured sequence recognition model. The study reveals that the classification model achieves an average accuracy of 74.8% for dynamic ASL gestures considering user independence.
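To make the connectionist temporal classification idea concrete, the sketch below shows greedy (best-path) decoding: take the most likely symbol per frame, merge consecutive repeats, then drop the blank symbol. This is the standard decoding rule for CTC outputs, not necessarily the decoder used in this work. The blank symbol `"-"`, the function name `ctc_greedy_decode`, and the toy probabilities are assumptions.

```python
BLANK = "-"  # assumed blank symbol; it must be part of the alphabet


def ctc_greedy_decode(frame_probs, alphabet):
    """Decode per-frame probabilities into a letter sequence with best-path CTC decoding.

    `frame_probs` is a list of frames, each a list of probabilities aligned with `alphabet`.
    """
    # Step 1: pick the most probable symbol in every frame.
    best_path = [
        alphabet[max(range(len(probs)), key=probs.__getitem__)]
        for probs in frame_probs
    ]
    # Step 2: merge consecutive repeats, then remove blanks.
    decoded = []
    previous = None
    for symbol in best_path:
        if symbol != previous and symbol != BLANK:
            decoded.append(symbol)
        previous = symbol
    return "".join(decoded)


alphabet = [BLANK, "a", "b"]
frames = [
    [0.10, 0.80, 0.10],  # a
    [0.20, 0.70, 0.10],  # a (repeat, merged)
    [0.90, 0.05, 0.05],  # blank separates the two "a" letters
    [0.10, 0.80, 0.10],  # a
    [0.10, 0.20, 0.70],  # b
    [0.20, 0.10, 0.70],  # b (repeat, merged)
]
word = ctc_greedy_decode(frames, alphabet)
```

The blank frame is what allows a doubled letter to survive the merge step, which matters for fingerspelled words that contain repeated letters.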

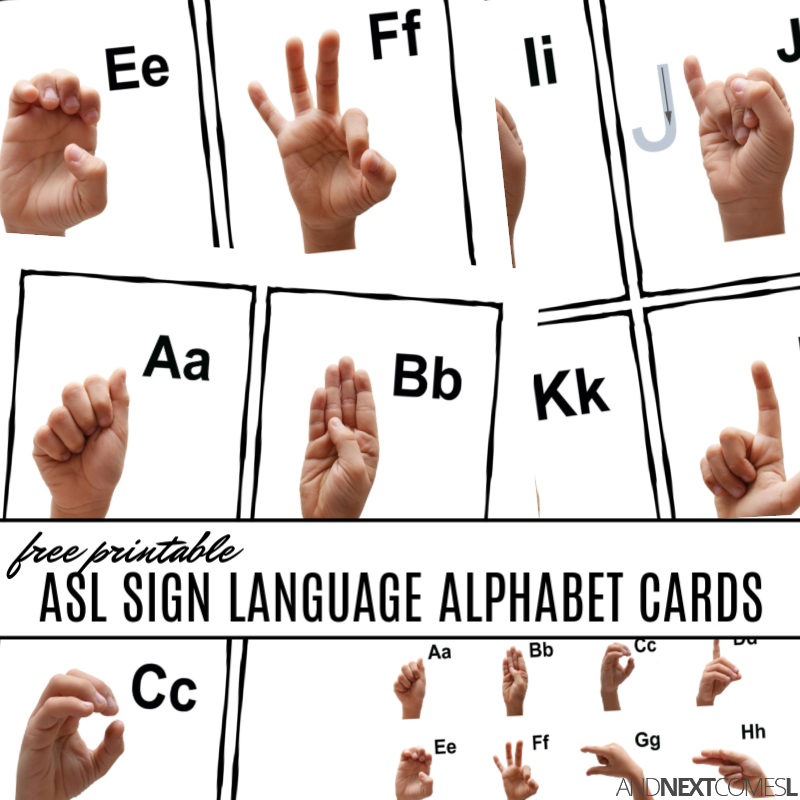

Sign language is designed as a natural communication method for the deaf community to convey messages and connect with society. In American Sign Language (ASL), twenty-six special sign gestures from the alphabet are used for the fingerspelling of proper words. The purpose of this research is to classify the hand gestures in the alphabet and recognize a sequence of gestures in fingerspelling using an inertial hand motion capture system. In this work, time-domain, time-frequency-domain, and angle-based features are extracted from the raw data for classification with convolutional neural network-based classifiers.
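As a rough illustration of the time-domain and angle-based features described above, the sketch below computes simple per-window statistics for one sensor channel and the angle between two 3-D direction vectors. The specific statistics, window size, and step are assumptions for illustration; the text does not list the exact features used. All function names are hypothetical.

```python
import math
from statistics import mean, pstdev


def sliding_windows(signal, size, step):
    """Yield consecutive windows of `size` samples, advancing by `step` samples."""
    for start in range(0, len(signal) - size + 1, step):
        yield signal[start:start + size]


def time_domain_features(window):
    """Return (mean, standard deviation, RMS, range) for one window of one channel."""
    rms = math.sqrt(sum(x * x for x in window) / len(window))
    return (mean(window), pstdev(window), rms, max(window) - min(window))


def angle_between(u, v):
    """Return the angle in degrees between two non-zero 3-D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    # Clamp to [-1, 1] to guard against rounding error before acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))


channel = [0.0, 1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0]  # toy single-axis signal
features = [
    time_domain_features(w) for w in sliding_windows(channel, size=4, step=2)
]
right_angle = angle_between((1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
```

Stacking such per-window feature vectors over time gives a 2-D array per gesture, which is one plausible input layout for a convolutional classifier.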
